Google unveiled TurboQuant recently:

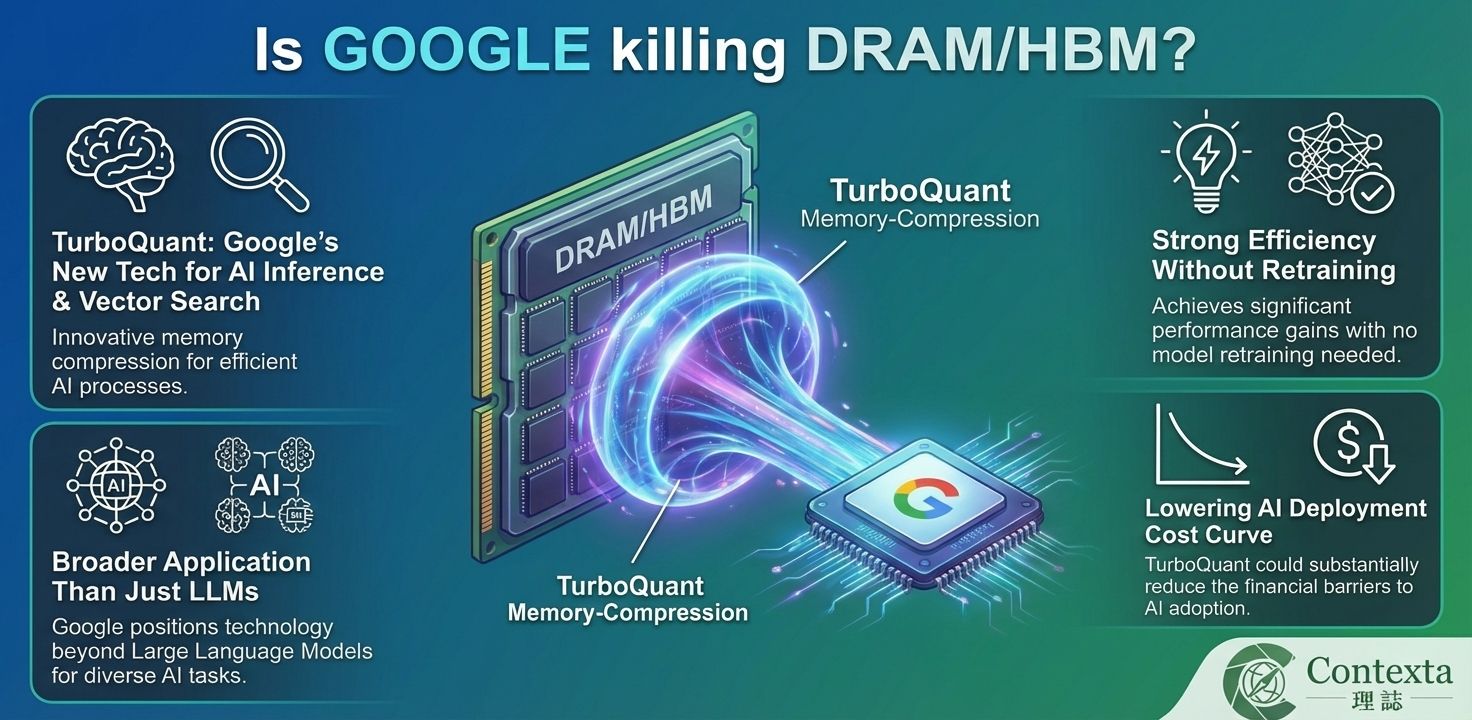

TurboQuant is Google’s new memory-compression technique for AI inference and vector search. It is designed to relieve the key-value (KV) cache bottleneck in large language models and large-scale vector retrieval systems, where high-dimensional vectors consume significant memory.

The main technical claim is strong efficiency without retraining. According to Google, TurboQuant can compress KV cache data to 3 bits without model training or fine-tuning, and tests on open-source models such as Gemma and Mistral showed about 6x KV-memory reduction. On NVIDIA H100 GPUs, Google reported up to 8x performance improvement versus unquantized keys.

TurboQuant works in two stages. It first uses PolarQuant to rotate data vectors for high-quality compression, then applies Quantized Johnson-Lindenstrauss (QJL) to reduce residual error and improve attention accuracy. Google says this helps address the extra 1–2 bits of memory overhead per number that traditional vector-quantization methods often introduce.
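The general "rotate first, then quantize" idea can be shown with a toy sketch. This is not Google's PolarQuant or QJL code: it swaps in a randomized Hadamard rotation followed by plain uniform 3-bit quantization, and it leaves out the residual-correction stage entirely. The vector, the seed, and all function names are hypothetical and chosen only for illustration.

```python
import math
import random

def fwht(vec):
    """Orthonormal fast Walsh-Hadamard transform (length must be a power of two).
    With the 1/sqrt(n) scaling, applying it twice returns the input."""
    out = list(vec)
    n = len(out)
    h = 1
    while h < n:
        for start in range(0, n, 2 * h):
            for i in range(start, start + h):
                a, b = out[i], out[i + h]
                out[i], out[i + h] = a + b, a - b
        h *= 2
    scale = 1.0 / math.sqrt(n)
    return [v * scale for v in out]

def quantize_dequantize(vec, bits):
    """Uniform scalar quantization over [min, max], then reconstruction."""
    lo, hi = min(vec), max(vec)
    levels = (1 << bits) - 1
    step = (hi - lo) / levels
    if step == 0:
        return list(vec)
    return [lo + round((v - lo) / step) * step for v in vec]

def squared_error(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

# Hypothetical test vector: small Gaussian values plus one large outlier.
rng = random.Random(0)
n = 256
x = [rng.gauss(0.0, 0.5) for _ in range(n)]
x[7] = 50.0

# Random sign flips followed by the Hadamard transform act as a random rotation.
signs = [rng.choice((-1.0, 1.0)) for _ in range(n)]

# Baseline: quantize the raw vector; the outlier stretches the 8-level grid.
plain = quantize_dequantize(x, bits=3)

# Rotate, quantize, then undo the rotation (inverse = transform, then sign flips).
rotated = fwht([s * v for s, v in zip(signs, x)])
rotated_q = quantize_dequantize(rotated, bits=3)
restored = [s * v for s, v in zip(signs, fwht(rotated_q))]

plain_err = squared_error(x, plain)
rotated_err = squared_error(x, restored)
print(f"plain 3-bit error:   {plain_err:.2f}")
print(f"rotated 3-bit error: {rotated_err:.2f}")
```

The rotation spreads the outlier's energy across all coordinates, so the 3-bit grid covers a much narrower range and the reconstruction error drops. That is the intuition behind rotating before compressing, under these toy assumptions.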

Google positions the technology as broader than just LLMs. The company says TurboQuant is relevant not only for KV-cache compression in AI models, but also for vector search engines. It has been validated on benchmarks including LongBench, Needle In A Haystack, ZeroSCROLLS, RULER, and L-Eval. Google says TurboQuant is set to be presented at ICLR 2026, while PolarQuant is planned for AISTATS 2026.

From an industry perspective, the technology improves efficiency rather than eliminating hardware demand outright. The reported benefit is mainly at the inference-stage KV cache, not model weights or training memory. In practical terms, the same GPU can support much longer context windows or larger batch sizes before running into memory limits. It does not imply a simple 6x cut in total memory or hardware demand.
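To make the "longer context on the same GPU" point concrete, here is a back-of-the-envelope sketch. The model shape, the 16 GiB KV budget, and the 16-bit baseline are all hypothetical assumptions, not figures from Google. The raw 16-to-3-bit ratio works out to about 5.3x, and the sketch does not try to reproduce Google's reported figure of about 6x.

```python
GIB = 1024 ** 3

def kv_bytes_per_token(num_layers, num_kv_heads, head_dim, bits_per_value):
    """KV cache bytes stored per token; the factor of 2 covers keys and values."""
    return 2 * num_layers * num_kv_heads * head_dim * bits_per_value / 8

def max_context_tokens(kv_budget_bytes, bytes_per_token):
    """Tokens that fit in a fixed KV-cache budget."""
    return int(kv_budget_bytes // bytes_per_token)

# Hypothetical model shape and memory budget (not taken from any Google result).
cfg = dict(num_layers=32, num_kv_heads=8, head_dim=128)
kv_budget = 16 * GIB  # memory assumed to be left for the KV cache after weights

fp16_ctx = max_context_tokens(kv_budget, kv_bytes_per_token(**cfg, bits_per_value=16))
q3_ctx = max_context_tokens(kv_budget, kv_bytes_per_token(**cfg, bits_per_value=3))

print(fp16_ctx)  # 131072 tokens at 16 bits per value
print(q3_ctx)    # 699050 tokens at 3 bits per value
```

The weights are untouched in this sketch. Only the KV budget stretches further, which is why the gain shows up as longer context or larger batches rather than as a smaller GPU.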

The bigger strategic takeaway is that TurboQuant could lower the cost curve of AI deployment. If inference becomes cheaper and more memory-efficient, more workloads may become economically viable, including longer-context reasoning, retrieval-heavy applications, and even some deployments on more local or constrained hardware.

Comment:

My view: TurboQuant is more likely a medium-term demand driver than a structural threat for DRAM/HBM, though it can look like a short-term headline risk for memory names.

TurboQuant sounds negative for DRAM on the surface because the headline is basically saying AI can use memory more efficiently. When investors see phrases like “6x compression,” the first reaction is usually to think that future memory demand may fall. That is why this kind of news can feel like a threat at first, especially for memory stocks that have already rallied a lot on the AI story.

But the more important point is that TurboQuant does not reduce all memory needs across AI. Google is talking specifically about KV cache memory during inference, not the whole memory requirement of training or the entire hardware stack. In other words, it helps AI systems use existing hardware better, rather than making memory suddenly unnecessary. Google says TurboQuant compresses KV caches to 3 bits, and that in its tests it delivered about 6x KV-memory reduction on some open models and up to 8x speedup on H100.

That is why I would not see it as a true long-term bearish signal for DRAM. If AI becomes cheaper and easier to run, then more companies may deploy it, more applications may become practical, and overall usage may grow. So even if the memory needed for each individual workload becomes lower, the total demand for AI infrastructure can still rise because the number of workloads increases. That is the more constructive way to think about it.
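A rough way to frame that argument is to ask how much workload growth would fully offset the compression. The sketch below is a toy model with hypothetical numbers: it assumes each workload carries a fixed, uncompressed footprint (weights and similar) plus a KV cache, and that only the KV part shrinks by the reported 6x.

```python
def breakeven_workload_growth(fixed_gib, kv_gib, kv_compression):
    """Growth in workload count at which total memory demand is unchanged,
    assuming only the KV-cache portion of each workload is compressed."""
    before = fixed_gib + kv_gib
    after = fixed_gib + kv_gib / kv_compression
    return before / after

# Hypothetical per-workload footprint: 14 GiB uncompressed, 16 GiB KV cache.
mixed = breakeven_workload_growth(fixed_gib=14, kv_gib=16, kv_compression=6)

# Extreme case where the workload is nothing but KV cache.
kv_only = breakeven_workload_growth(fixed_gib=0, kv_gib=16, kv_compression=6)

print(round(mixed, 2))    # 1.8
print(round(kv_only, 2))  # 6.0
```

Under these assumptions, total memory demand stays flat if the number of workloads grows 1.8x, and rises with any growth beyond that. The 6x break-even only applies in the extreme case where memory is entirely KV cache.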

Disclaimer:

The above content reflects personal views and market discussion only. It does not constitute any investment advice or recommendation to buy or sell. Investing involves risk, and readers should make their own assessments and bear responsibility for their own decisions.